ABAP Environment Pipeline

The ABAP environment pipeline enables Continuous Integration for the SAP BTP, ABAP environment, also known as Steampunk. The pipeline contains several stages and supports different scenarios. Users choose the subset of stages that fits their use case, for example running nightly ATC checks and AUnit tests, or building an ABAP add-on for Steampunk.

Scenarios

The following scenarios are available.

Continuous Testing

This scenario is intended to improve software quality through continuous checks and testing. Please refer to the scenario documentation for more information.

Building ABAP Add-ons for Steampunk

This scenario is intended for SAP partners who want to offer a Software as a Service (SaaS) solution on Steampunk. This is currently the only use case for building ABAP add-ons and, more specifically, for the stages "Initial Checks", "Build", "Integration Tests", "Confirm" and "Publish". Please refer to the scenario documentation for more information.
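
The idea of picking a subset of stages per scenario can be modeled with a small sketch. This is only an illustration and not the pipeline's actual configuration format: the helper `select_stages` is hypothetical, and treating the order in which the add-on stages are listed above as their execution order is an assumption.

```python
# Illustrative model only; this is NOT the pipeline's real configuration format.
# Stage names are taken from the text above. Treating their listed order as the
# execution order is an assumption.
ADDON_BUILD_STAGES = [
    "Initial Checks",
    "Build",
    "Integration Tests",
    "Confirm",
    "Publish",
]


def select_stages(available, configured):
    """Return the configured stages in the order given by `available`.

    `select_stages` is a hypothetical helper. It rejects names that are not
    in `available`, so typos in a configuration are caught early.
    """
    unknown = [name for name in configured if name not in available]
    if unknown:
        raise ValueError(f"unknown stages: {unknown}")
    return [name for name in available if name in configured]


if __name__ == "__main__":
    # A user who only configures two of the add-on stages.
    for stage in select_stages(ADDON_BUILD_STAGES, ["Publish", "Build"]):
        print(stage)
```

In this model, a stage runs only if it is configured, and the configured stages always run in the pipeline's fixed order, regardless of the order in which they were configured.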

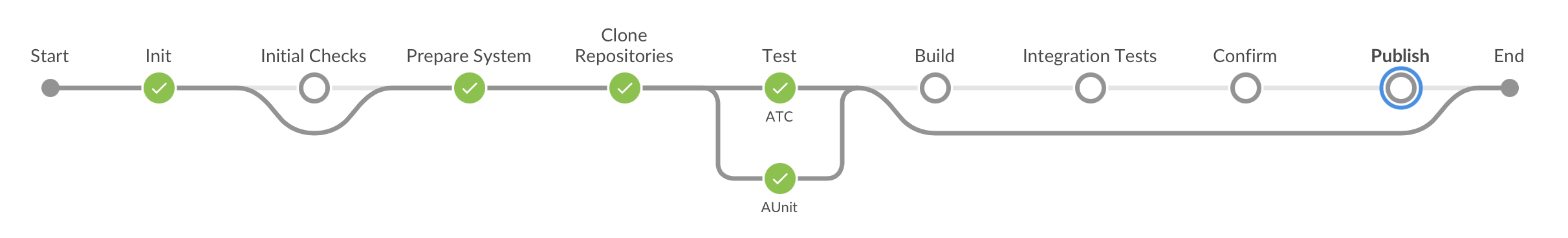

Pipeline Stages

The following stages and steps are part of the pipeline:

Please navigate to a stage or step to learn more details. Here you can find a step-by-step example on how to configure your pipeline.